Most Defence Cyber Certification (DCC) guidance assumes the reader has an office with a perimeter firewall and a fleet of laptops. For modern cloud-native and SaaS suppliers, that model does not fit. The perimeter belongs to someone else (the cloud provider), the fleet is a mix of personal laptops and ephemeral containers, and "the office" is Slack. DCC still applies, and the scheme has specific expectations for cloud-native suppliers, but the evidence looks fundamentally different from the traditional audit template.

This guide walks through how to scope DCC for a cloud-native supplier, what evidence the assessor is actually looking for in a cloud context, and how platform gap analysis materially reduces the engagement effort at L1.

The cloud-native supplier pattern

For this guide a "cloud-native" supplier is one with:

- No on-premise infrastructure (or a trivial amount).

- Core business infrastructure hosted entirely on AWS, Azure, or GCP.

- SaaS-based operational tooling (Microsoft 365 or Google Workspace, Slack, GitHub, Jira, a CI/CD platform, a customer data platform).

- Distributed workforce, BYOD or company-issued devices managed by MDM.

- Customer-facing services delivered via cloud-hosted APIs or web applications.

This pattern covers modern consultancies, analytics firms, software product companies, data science teams, and many mid-sized specialist defence suppliers.

Scoping a cloud-native DCC engagement

The first and most important scoping decision is: what are the in-scope systems?

The common mistake is to define the scope too narrowly ("only the MOD project Slack channel and the MOD project GitHub repo"). Assessors rarely accept very narrow scopes because MOD data rarely stays contained: it flows into the engineer's laptop, into the code that processes it, into the logs that code writes, and into the backups of those logs. The systems around the data matter too, such as the SSO platform that authenticates the engineer and the CI/CD pipeline that builds the code. All of these are legitimately part of the attack surface.

A defensible scope for a cloud-native supplier typically covers:

- Identity and access platform (Microsoft Entra ID, formerly Azure AD; Google Workspace; Okta).

- Operational platforms (Microsoft 365 or Google Workspace for email and document storage).

- Development infrastructure (GitHub or equivalent, CI/CD pipelines).

- Production infrastructure hosting any MOD-relevant service or data.

- Communication platforms (Slack, Teams) insofar as MOD data flows through them.

- Endpoint devices used by personnel with MOD data access.

The supplier register covers the SaaS providers underneath this scope: Microsoft or Google, the cloud provider(s), GitHub, Slack, the observability platform, the CI/CD tooling.

Evidence for the five technical controls in a cloud-native context

Firewalls. Cloud-native suppliers evidence this via cloud security groups, VPC configurations, and WAF rules - not a perimeter firewall. Evidence: current security group configurations exported from AWS, Azure, or GCP, documented ruleset justifications, and evidence of periodic review. A security group that allows inbound SSH or RDP from 0.0.0.0/0 is a mandatory finding.
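The open SSH/RDP check is easy to script against an exported configuration. The following is a minimal sketch, assuming the export uses the JSON shape returned by the AWS `describe-security-groups` call (`GroupId`, `IpPermissions`, `IpRanges`, `Ipv6Ranges`). The function name `open_admin_rules` and the sample data are hypothetical, and Azure or GCP exports would need their own field mapping.

```python
ADMIN_PORTS = {22: "SSH", 3389: "RDP"}
ANYWHERE = {"0.0.0.0/0", "::/0"}


def open_admin_rules(security_groups: list[dict]) -> list[tuple[str, str]]:
    """Return (group_id, service) pairs where SSH or RDP is open to the internet."""
    findings = []
    for group in security_groups:
        for perm in group.get("IpPermissions", []):
            sources = {r.get("CidrIp") for r in perm.get("IpRanges", [])}
            sources |= {r.get("CidrIpv6") for r in perm.get("Ipv6Ranges", [])}
            if not sources & ANYWHERE:
                continue
            protocol = perm.get("IpProtocol")
            if protocol == "-1":
                # "-1" means all protocols, which implies all ports.
                low, high = 0, 65535
            elif protocol == "tcp":
                low, high = perm["FromPort"], perm["ToPort"]
            else:
                continue  # UDP/ICMP rules are not relevant to SSH or RDP
            for port, service in ADMIN_PORTS.items():
                if low <= port <= high:
                    findings.append((group["GroupId"], service))
    return findings


# Hypothetical export: one group exposes SSH, the other only HTTPS.
sample_groups = [
    {"GroupId": "sg-aaa", "IpPermissions": [
        {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]}]},
    {"GroupId": "sg-bbb", "IpPermissions": [
        {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]}]},
]

print(open_admin_rules(sample_groups))
```

Keeping the script output alongside the exported configuration gives the periodic review a dated, repeatable record.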

Secure configuration. Evidence: infrastructure-as-code repositories (Terraform, CloudFormation, Bicep) showing secure baseline configurations, CIS benchmark adherence for compute resources, CIS benchmark or CSA STAR evidence for the cloud provider, secure container base images if using containers, and a documented secure baseline for SaaS administrative settings (M365, Google Workspace, GitHub).

Security update management. Evidence: cloud-native patch cadence for OS images, base container image rebuild cadence, SaaS provider uptime and patching (the provider's responsibility, evidenced by their certifications), evidence that your own code dependencies are updated regularly (Dependabot or equivalent reports, SBOM generation).

User access control. Evidence: SSO configuration with MFA enforcement, conditional access policies, IAM audit evidence showing principle of least privilege, periodic access review evidence, privileged access separation (admin accounts separate from day-to-day accounts), break-glass account handling.
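A periodic access review can start from an export of accounts from the identity platform. The sketch below assumes a hypothetical export with four fields per account (`user`, `mfa_enabled`, `privileged`, `last_sign_in`) and an illustrative 90-day dormancy threshold; neither the field names nor the threshold come from the scheme.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Account:
    user: str
    mfa_enabled: bool
    privileged: bool
    last_sign_in: date


def review_accounts(accounts, today, dormant_after=timedelta(days=90)):
    """Return (user, finding) pairs for missing MFA and dormant accounts."""
    findings = []
    for account in accounts:
        if not account.mfa_enabled:
            label = "privileged account without MFA" if account.privileged else "account without MFA"
            findings.append((account.user, label))
        if today - account.last_sign_in > dormant_after:
            findings.append((account.user, "dormant account"))
    return findings


# Hypothetical export from the identity platform.
sample_accounts = [
    Account("alice", True, False, date(2025, 5, 30)),
    Account("admin-bob", False, True, date(2025, 5, 28)),
    Account("carol", True, False, date(2024, 11, 1)),
]

for user, finding in review_accounts(sample_accounts, today=date(2025, 6, 1)):
    print(f"{user}: {finding}")
```

Saving the dated output of each run is one simple way to produce the periodic access review evidence described above.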

Malware protection. Evidence: MDM policy showing AV/EDR enforcement on endpoints, mail gateway evidence (attachment scanning, link protection), and, where applicable, WAF evidence for public-facing services. For fully managed SaaS (like Microsoft 365) the provider's attestations cover a substantial portion.

The shared responsibility model

Cloud-native suppliers must be able to articulate clearly what they are responsible for versus what the cloud or SaaS provider is responsible for. This is the "shared responsibility model" conversation, and assessors test it.

For AWS, Azure, and GCP the provider is responsible for the physical data centre, the hypervisor, the underlying network, and the availability of the service. The customer is responsible for configuration of the service they use - how IAM is set up, how security groups are defined, how data is encrypted, how logs are captured, how access is managed.

For SaaS providers like Microsoft 365 the provider is responsible for the application availability, the underlying infrastructure, and significant configuration security. The customer is responsible for tenant-level configuration (conditional access, MFA, data loss prevention, retention policies), user identity, and the security of data the customer chooses to put in the service.

Evidence that this model is understood: a documented shared responsibility matrix covering each cloud and SaaS provider the supplier uses, mapping which side is responsible for each control area. A short document (often 2-3 pages) is sufficient.
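The matrix can also be kept as structured data so that gaps are visible. The following is a minimal sketch: the AWS and Microsoft 365 rows follow the split described above, while the Slack row, the `shared` owner value, and the function name `unresolved` are illustrative assumptions.

```python
VALID_OWNERS = {"provider", "customer", "shared"}

# provider -> control area -> responsible party
MATRIX = {
    "AWS": {
        "physical data centre": "provider",
        "hypervisor": "provider",
        "underlying network": "provider",
        "service availability": "provider",
        "IAM configuration": "customer",
        "security group rules": "customer",
        "data encryption": "customer",
        "log capture": "customer",
    },
    "Microsoft 365": {
        "application availability": "provider",
        "underlying infrastructure": "provider",
        "tenant configuration": "customer",
        "user identity": "customer",
        "data placed in the service": "customer",
    },
    "Slack": {  # hypothetical row with an undecided entry
        "application availability": "provider",
        "workspace configuration": None,
    },
}


def unresolved(matrix):
    """Return (provider, control_area) pairs that have no valid owner recorded."""
    return [
        (provider, area)
        for provider, owners in matrix.items()
        for area, owner in owners.items()
        if owner not in VALID_OWNERS
    ]


print(unresolved(MATRIX))
```

An empty result means every listed control area has a named owner; it does not show that the list of control areas is itself complete.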

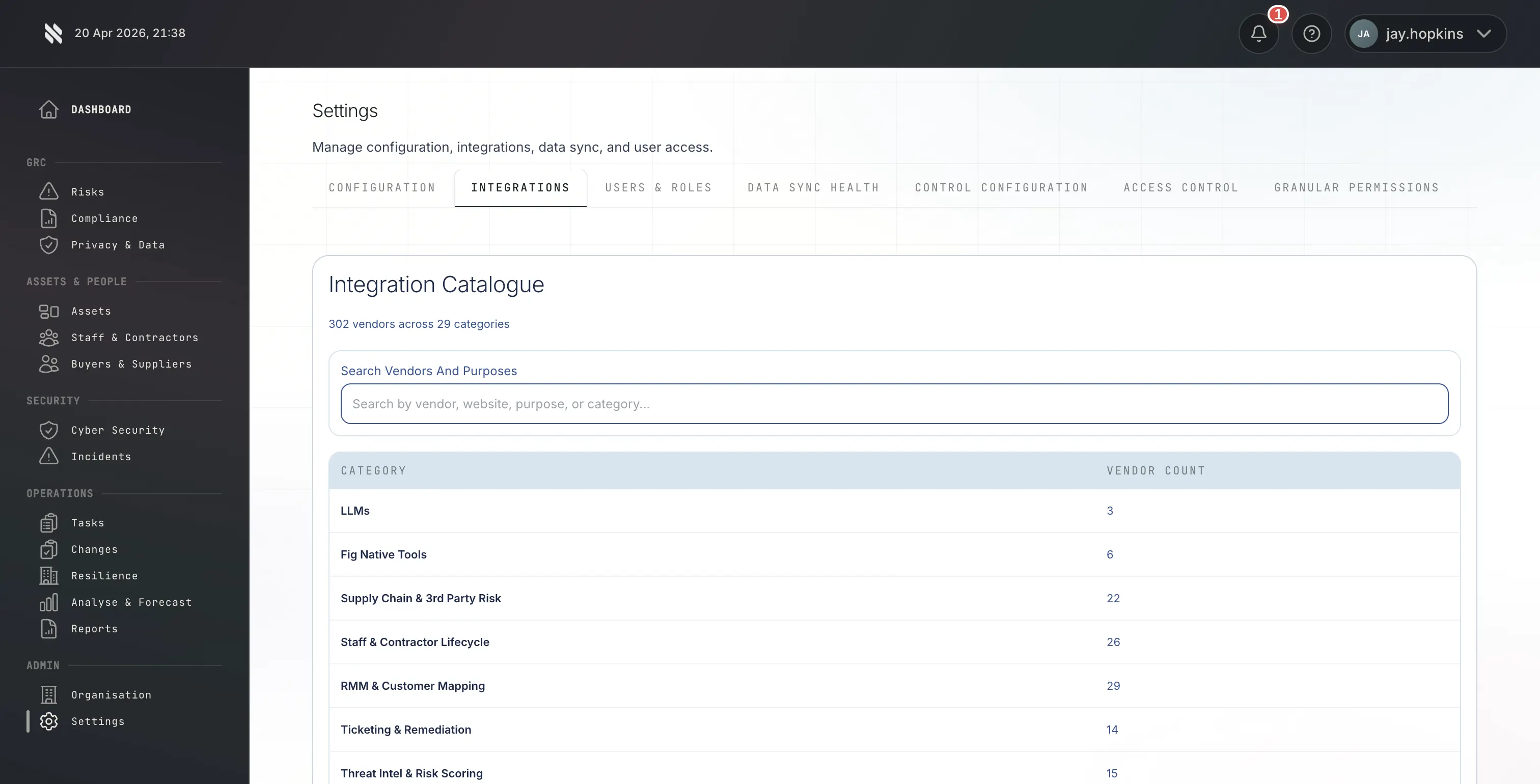

For L1 engagements Fig runs its technology platform against the supplier's in-scope cloud infrastructure and SaaS configuration. The platform performs automated gap analysis covering:

- Cloud infrastructure misconfigurations - open security groups, missing encryption, unused IAM roles, overly permissive policies.

- Identity platform posture - MFA coverage, conditional access gaps, dormant accounts, privileged account separation.

- Endpoint coverage - enrolment gaps, compliance status.

- Exposure - public-facing services, known CVEs, subdomain inventory, public DNS hygiene.

- Supply chain indicators - SaaS provider certification, subprocessor transparency, open-source dependency health.

This is a material differentiator versus pure audit-based Certification Bodies, because cloud-native suppliers typically have a long tail of minor misconfigurations that would otherwise surface as findings during formal assessment. Running the platform first means those findings are remediated quietly before the assessor ever looks at the submission.

The platform is worth approximately £4,200 per annum as a standalone subscription and is included in Fig's L1 fee for the duration of the engagement.

Evidence for governance in a cloud-native context

The governance artefacts - Information Security Policy, Incident Response Plan, ISMS - look structurally the same in a cloud-native context, but the content is different. Cloud-native-specific considerations:

Information Security Policy. Must address remote working, BYOD, cloud platform usage, SaaS tool usage, and acceptable use of personal accounts for work purposes.

Incident Response Plan. Must address cloud provider incident notifications (how you are notified if AWS or Microsoft has an incident affecting you), SaaS provider incidents (data breach notification from a third party you use), and code supply chain incidents (compromise of an open-source dependency or CI/CD tool).

Data handling policy. Must address where customer data can be stored, cross-border transfers, retention and destruction in cloud contexts, encryption at rest and in transit.

Access control policy. Must reflect SSO and federation, not traditional on-premise AD.

Change management. Must reflect CI/CD, infrastructure-as-code, and feature flags - not a traditional change advisory board (CAB).

Common findings for cloud-native suppliers

Unclear shared responsibility. The supplier claims to rely on "AWS security" without being able to articulate what AWS is and is not responsible for in their specific architecture.

Overly permissive IAM. Broad-use service accounts, long-lived access keys, lack of role separation between production and development.
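Long-lived access keys are straightforward to detect from a key inventory. This sketch assumes a hypothetical inventory of dictionaries with `id` and `created` fields and an illustrative 90-day rotation limit; the limit is an assumption, not a scheme requirement.

```python
from datetime import date


def stale_keys(keys, today, max_age_days=90):
    """Return the ids of access keys older than max_age_days."""
    return [k["id"] for k in keys if (today - k["created"]).days > max_age_days]


# Hypothetical inventory of service account keys.
sample_keys = [
    {"id": "ci-deploy", "created": date(2023, 3, 1)},
    {"id": "reporting", "created": date(2025, 5, 1)},
]

print(stale_keys(sample_keys, today=date(2025, 6, 1)))
```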

SaaS tool sprawl. Dozens of SaaS tools in use across the organisation, many onboarded without security review, with no documented register.

Public GitHub repositories leaking secrets. Surprisingly common and a rapid disqualifier at assessment.
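A basic pre-assessment check for committed secrets can be sketched with two regular expressions: one for the `AKIA` prefix used by long-term AWS access key IDs and one for PEM private key headers. This is an illustration only; the function name `scan_text` is hypothetical, and a dedicated secret scanner covers far more formats and also searches commit history.

```python
import re

PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
}


def scan_text(text):
    """Return (line_number, kind) for each line that matches a secret pattern."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for kind, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((line_no, kind))
    return hits


# Sample content using the placeholder key ID from AWS documentation.
sample_file = (
    "region = eu-west-2\n"
    "aws_access_key_id = AKIAIOSFODNN7EXAMPLE\n"
    "-----BEGIN RSA PRIVATE KEY-----\n"
)

for line_no, kind in scan_text(sample_file):
    print(f"line {line_no}: {kind}")
```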

No evidence of access review. Cloud-native suppliers often use SSO excellently but never periodically review who has what access.

Missing MFA on privileged accounts. Particularly break-glass or root accounts for cloud providers.

Log retention gaps. MOD data handling typically requires logs to be retained for 12 months minimum; cloud-native defaults are often 30-90 days unless explicitly configured otherwise.
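The retention gap can be checked mechanically once the configured retention of each log source is known. This sketch assumes a hypothetical mapping from log source name to retention in days, with `None` meaning the logs never expire, and treats 12 months as 365 days.

```python
REQUIRED_DAYS = 365  # 12 months, per the retention expectation above


def retention_gaps(retention_by_source, required_days=REQUIRED_DAYS):
    """Return the sources whose retention is shorter than required_days."""
    return {
        source: days
        for source, days in retention_by_source.items()
        if days is not None and days < required_days
    }


# Hypothetical retention settings gathered from the cloud and SaaS consoles.
sample_retention = {
    "cloud audit trail": 90,
    "application logs": 30,
    "identity sign-in logs": 400,
    "archive bucket": None,  # never expires
}

print(retention_gaps(sample_retention))
```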

Proportionality for small cloud-native suppliers

The scheme is proportionate. A four-person AI consultancy hosting client work on AWS does not need an enterprise-grade ISMS. What is expected:

- Clear articulation of the cloud stack used.

- Documented shared responsibility matrix (2-3 pages).

- Infrastructure-as-code repositories showing how the environment is built.

- SSO with MFA for the team.

- Documented access review (quarterly is fine).

- Supplier register covering the ~10 SaaS tools in use.

- Incident response plan that actually references cloud provider notification channels.

That evidence pack satisfies L0 or L1 at small cloud-native scale.

The practical path

Cloud-native suppliers benefit disproportionately from an L1 engagement with a technology-platform-equipped Certification Body, because the platform surfaces the long tail of configuration findings before formal assessment. For L0 engagements the content is more documentary but the principles above still apply: use cloud-specific scoping and evidence rather than a traditional office-network template.
